ORCA Benchmark Reveals How AI’s Core Design Makes It Unreliable for Everyday Math

PR Newswire

KRAKÓW, Poland, Nov. 5, 2025

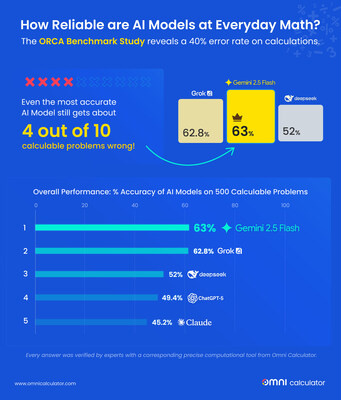

AIs Fail Nearly 40% of Everyday Math Problems, Study Reveals

KRAKÓW, Poland, Nov. 5, 2025 /PRNewswire/ -- Omni Calculator today released the findings of the ORCA (Omni Research on Calculation in AI) Benchmark, a comprehensive study evaluating leading AI chatbots on everyday math. The results are stark: users have a significant chance of receiving a wrong answer on calculable tasks ranging from splitting a bill to projecting investment returns.

After testing five leading models on 500 real-world problems, the benchmark found that no model scored above 63% accuracy. Even the top performer, Gemini 2.5 Flash, gets nearly 4 out of 10 problems wrong. The most common failures are not in advanced logic but in simple arithmetic, rounding errors, or applying the wrong formula.

According to the study’s lead author, Joanna Śmietańska-Nowak, a PhD in Physics with a postgraduate degree in Machine Learning, this inconsistency stems from a fundamental architectural mismatch.

“When we talk about mathematical calculations in AIs, it’s important to remember that they don’t really operate like calculators. AIs are pattern recognizers trained on text, not symbolic reasoners built to perform arithmetic,” Śmietańska-Nowak explained. “They learn statistical associations between tokens rather than mathematical rules, which is why they produce answers that sound logical but are numerically wrong.”

Key Findings:

- None of the five models tested (ChatGPT-5, Gemini 2.5 Flash, Claude 4.5 Sonnet, DeepSeek V3.2, and Grok-4) scored higher than 63% overall.

- 68% of errors were mechanical mistakes: calculation errors (33%) and precision/rounding issues (35%).

- Performance varied wildly. In the Finance & Economics domain, some models reached 70% to 80% accuracy while others fell below 40%.

- AIs struggled most in domains such as Physics and Health & Sports, where a real-world scenario must first be translated into a formula.
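Rounding slips of the kind counted above are easy to make by hand, too. As an illustration only, not drawn from the ORCA study itself, the following Python sketch splits a bill evenly using the standard-library `decimal` module: each share is rounded down to the cent, and any leftover cents are handed out one at a time so the shares always add back up to the total. The helper name `split_bill` and the amounts are hypothetical, and the total is assumed to have at most two decimal places.

```python
from decimal import Decimal, ROUND_DOWN


def split_bill(total: str, people: int) -> list[Decimal]:
    """Split `total` into `people` shares that sum exactly to the total.

    Hypothetical helper for illustration. Assumes `total` has at most
    two decimal places (whole cents).
    """
    amount = Decimal(total)
    cent = Decimal("0.01")
    # Everyone first gets the share rounded DOWN to the cent.
    base = (amount / people).quantize(cent, rounding=ROUND_DOWN)
    # Count the cents still unassigned after the rounded-down shares.
    leftover = int((amount - base * people) / cent)
    # Hand out one extra cent to the first `leftover` people.
    return [base + cent if i < leftover else base for i in range(people)]


shares = split_bill("100.00", 3)
print(shares)       # [Decimal('33.34'), Decimal('33.33'), Decimal('33.33')]
print(sum(shares))  # 100.00
```

Naively rounding 100.00 / 3 to 33.33 for each person would leave a cent unaccounted for; distributing the remainder explicitly is one common way to avoid that.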

The Bottom Line for Users: Double-check AI calculations

The ORCA Benchmark highlights a critical rule: always double-check the numbers. For any task with real-world consequences, such as a loan calculation, a medical dosage, or a recipe conversion, any AI’s output must be verified using a dedicated calculator or tool.
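As one example of such a check, here is a minimal Python sketch of the standard fixed-rate loan payment (annuity) formula, payment = P * r / (1 - (1 + r)^(-n)), where P is the principal, r the monthly interest rate, and n the number of monthly payments. The function name `monthly_payment` and the sample loan are hypothetical; the sketch assumes monthly compounding and a fully amortizing loan, and is not a substitute for a vetted calculator.

```python
def monthly_payment(principal: float, annual_rate: float, years: int) -> float:
    """Fixed monthly payment on a fully amortizing loan (hypothetical helper).

    Uses the standard annuity formula with monthly compounding.
    `annual_rate` is a decimal fraction, e.g. 0.06 for 6% per year.
    """
    n = years * 12           # total number of monthly payments
    r = annual_rate / 12     # monthly interest rate
    if r == 0:
        return principal / n  # interest-free: repay the principal in equal parts
    return principal * r / (1 - (1 + r) ** -n)


# Hypothetical example: $200,000 borrowed at 6% per year for 30 years.
payment = monthly_payment(200_000, 0.06, 30)
print(f"{payment:.2f}")  # 1199.10
```

A chatbot's answer for the same loan that differs from this figure by more than a cent is worth rechecking.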

Download the full Omni Calculator AI benchmark report here: https://www.omnicalculator.com/reports/omni-research-on-calculation-in-ai-benchmark

About Omni Calculator:

Omni Calculator has been building tools to solve real-world problems for over a decade. Our team of experts creates reliable calculators that empower people to make informed decisions. The ORCA Benchmark is a natural extension of our mission to bring clarity and accuracy to everyday calculations.

View original content to download multimedia: https://www.prnewswire.com/news-releases/orca-benchmark-reveals-how-ais-core-design-makes-it-unreliable-for-everyday-math-302605415.html

SOURCE Omni Calculator